On Thursday morning, Disney made two significant moves that indicate how the titanic entertainment brand will handle the artificial intelligence future—and they’re a bit confused, contradictory, and highly concerning.

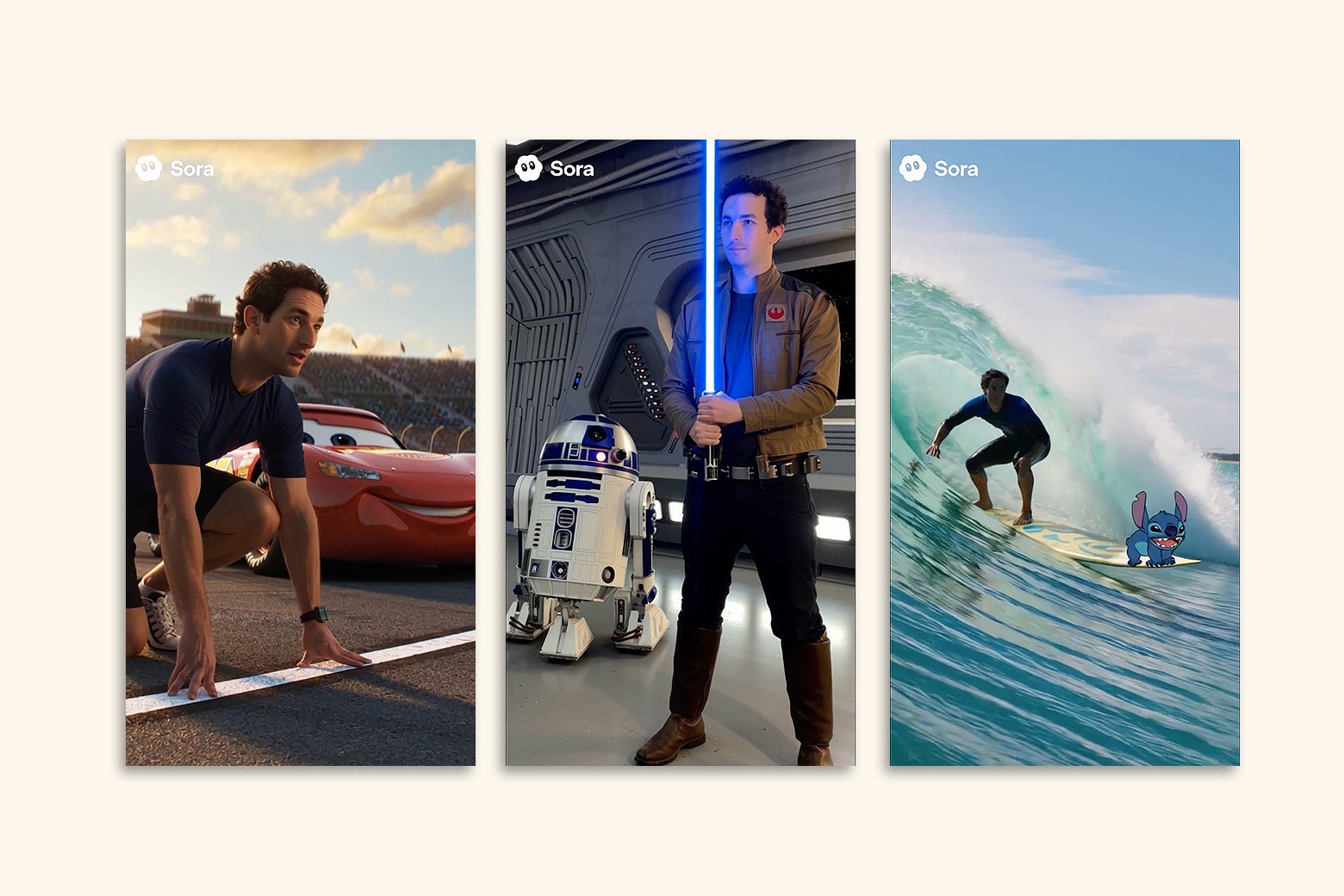

First, the ChatGPT-maker OpenAI announced on its website that it was receiving a $1 billion equity investment from Disney, as part of a three-year licensing agreement that would bring “more than 200 animated, masked and creature characters from Disney, Marvel, Pixar and Star Wars” to the visual-generation tools Sora and ChatGPT Images. (Ironically, the Silicon Valley firm’s Dall-E image generator, named in part after Disney’s iconic postapocalyptic trash-cleaning robot, is not mentioned within the release.) For one year, the deal will allow an exclusive window for those products’ subscribers to prompt up “social videos” with these specific characters, including (but hardly limited to) Mickey and Minnie Mouse, Moana, the Black Panther, and Darth Vader. Notably, while their A.I. versions will also get some props (e.g., Vader’s lightsaber), they will not be given the voices of their original actors (e.g., James Earl Jones’ booming baritone).

A “selection of these fan-inspired Sora short-form videos” will also be viewable on Disney+, in a breakthrough that OpenAI’s chief marketing officer compared, on LinkedIn, to the introduction of sound to cinema. It also fulfills Disney CEO Bob Iger’s November pledge to investors that the streamer will allow its paying customers to “create user-generated content and to consume user-generated content—mostly short-form—from others.” (At the time, the A.I. company that would facilitate this went unnamed; now we know it was OpenAI.) Another plank of Thursday’s arrangement mentions that ChatGPT will be “deployed” to Disney employees; just hours later, OpenAI followed up by rolling out its newest large language model update, GPT-5.2, which will likely be offered to those workers for the sake of assisting with “professional knowledge work” (and also come with an “Adult Mode” at some point next year).

Right as Disney and OpenAI hard-launched their relationship, the former also kicked off a legal brawl with a different Big Tech company, sending a cease-and-desist letter to Google for “unauthorized exploitation of Disney’s copyrighted works” on a “massive scale.” The letter accuses the Big Tech giant of not just using Disney works to train A.I. sans permission, but also embedding that allegedly infringing A.I. across parent company Alphabet’s sprawling suite of subsidiaries (e.g., YouTube, a platform Disney just battled with over the fraught issue of intellectual property and carriage rights). This isn’t the first time Disney has done this: It previously got the controversial startup Character.ai to take down custom chatbots explicitly modeled after its characters, and it sent a cease-and-desist to Meta back in May, following reports that the social media giant’s Disney-character A.I. bots (e.g., Anna from Frozen) could engage in “sexually explicit” conversations with underage users. Additionally, in June, Disney and Universal Studios jointly filed a lawsuit against Midjourney for generating near-exact replicas of their fictional icons.

One might think Disney would also be concerned with the potential for its biggest names to be exploited in a rather … suggestive manner on Sora 2, especially since the app was able to generate many Disney-owned characters during its October rollout, and had initially forced rightsholders to actively “opt out” of Sora operability. Even beyond that exclusive app, deepfake sexual content has saturated popular short-form video platforms like TikTok, as CNN Business recently reported. (And that’s just the digital realm; we’ve also got “A.I.-powered” kids’ toys that talk about sex, because this is The Future or something.)

Per OpenAI’s press release, the company is “committed” to “including age-appropriate policies” and “maintaining robust controls to prevent the generation of illegal or harmful content.” Naturally, any lay observer who’s read the extensive reporting into how OpenAI has loosened ChatGPT’s safety guardrails, even as hundreds of thousands of users continue to report real-life harms, may be skeptical of this promise. Not to mention, if both OpenAI CEO Sam Altman and President Donald Trump get their preferred regulations in place, there will be little legal recourse for IP exploitation, deepfake sexual content, or IP-exploiting sexual content: On Thursday night, Trump signed a long-threatened executive order aimed at overriding all state-level A.I. regulations in favor of a single, unifying federal standard, a measure that has been supported by Altman. While the EO does not have the binding force of a congressional law and claims not to be targeting local child-safety protections, the intent is pretty clear: to preempt all seemingly “onerous” A.I. laws passed in states like California, where Disney is headquartered and where Altman has himself fought against bills that would have restricted minors’ access to A.I. products.

Thursday morning also saw the governor of New York—where Disney does plenty of business—sign bills requiring the film, TV, and ad industries to publicly disclose any planned use of “synthetic figures” and to obtain explicit consent from the estates of dead celebrities to re-create their likenesses. Partnerships with Disney executives and the federal government may prove useful to OpenAI in dancing around such rules.

Bob Iger isn’t sweating any of it. He made a joint appearance with Altman on CNBC Thursday morning, where he said that Sora subscribers will also be able to put likenesses of themselves into scenes like “that one lightsaber fight from Star Wars.” (You know, that one.) Iger insisted that “this does not in any way represent a threat to the creators at all … in part because there’s a license fee associated with it.” How this fee will be paid, and who would benefit from it, was not made clear.

“OpenAI is both respecting and valuing our creativity, both our characters, but also those that have created those characters,” Iger added, noting that this partnership was coming from a spirit of technological openness similar to Disney’s early embrace of the iTunes Store. “No human generation has ever stood in the way of technological advance, and we don’t intend to try.”

That’s a weird way of characterizing Disney’s strategy when it appears to be fighting with every tech company except OpenAI. It’s also all the more eyebrow-raising when you remember how notorious the House of Mouse has been for shielding its treasured IP: suing craftspeople who use any Disney characters or resemblances thereof on their creations, scouring Etsy for Baby Yoda knockoffs, and lobbying to amend federal copyright law so as to keep Mickey Mouse, specifically, out of the public domain. That being said, as Steamboat Willie (specifically) was nearing his public domain debut, Disney’s famed aggression could not keep up with the barrage of autogenerated images featuring Mickey doing 9/11.

All of which is to say: Expect to see another incarnation of that on Sora 2 or Disney+ sooner rather than later, especially as Iger’s pal Altman keeps working with Trump to bulldoze A.I. protections for both kids and creatives, and as the House of Mouse attempts to confront A.I. firms while also appearing to embrace the future. It’s a mess, and by the looks of it, the fallout will be yet another slopfest on your streaming feed.